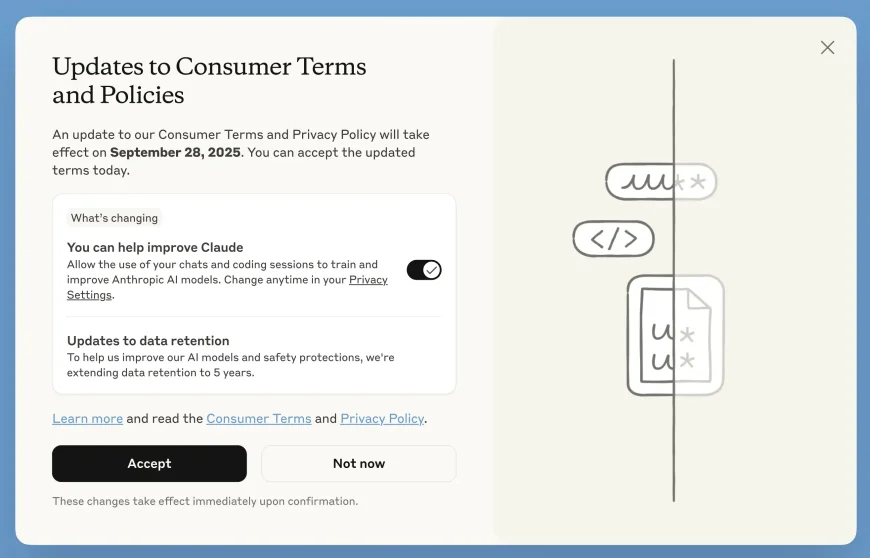

Claude Users Can Now Choose to Share Data to Improve AI

Anthropic’s latest update to Claude gives users more control over how their data is used for model improvement—while extending data retention for those who opt in.

Anthropic has rolled out updates to its Consumer Terms and Privacy Policy for Claude Free, Pro, and Max users. Users can now choose to allow their chats and coding sessions to be used to improve Claude models. This is an opt-in setting and can be adjusted at any time through Privacy Settings. New users will see the option during sign-up, while current users will get an in-app prompt.

Only new or resumed conversations and coding sessions will be included if permission is granted. According to Anthropic, “by participating, you’ll help us improve model safety, making our systems... less likely to flag harmless conversations.” The setting won’t affect old chats that are not resumed, and it does not apply to Claude for Work, Gov, Education, or API access via partners like Amazon Bedrock or Google Cloud.

If users opt in, the data retention period will increase from 30 days to five years. Deleted chats won’t be used for training. Anthropic says the extended period supports safer and more consistent model development. Data from feedback on Claude’s responses will also be kept for five years under this setting.

Anthropic emphasizes it does not sell data and uses automated tools to mask sensitive information. The new terms take effect once accepted, and users must make their choice by September 28, 2025, to continue using Claude.

User feedback is mixed—some appreciate the transparency and control, while others question the five-year retention period.
